Introduction

The arrival of ChatGPT and other advanced OpenAI language models has changed how chatbots and conversational AI are built. These powerful language models have opened up new possibilities for creating intelligent, interactive conversational interfaces. Still, one persistent challenge in chatbot development has been effectively managing conversation memory and context. In this article, we will explore the importance of conversation memory, see how to use the ChatGPT APIs, and delve into practical examples and strategies for managing conversation context.

Understanding Conversation Memory

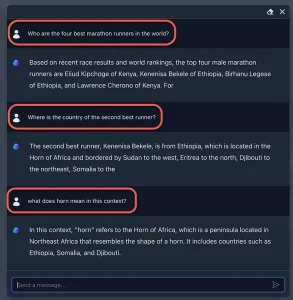

Conversation memory, also known as conversational context, refers to the ability of a chatbot to retain and refer back to previous interactions during a conversation. Traditionally, this has been a challenging aspect of chatbot development. Let’s illustrate this with an example conversation:

User: What is the weather like today?

Chatbot: It’s sunny and warm.

User: Should I bring an umbrella?

Chatbot: No, you will not need an umbrella.

In this conversation, the second question is contextually implicit: it only makes sense in light of the weather information from the first exchange. Effective conversation memory enables chatbots to understand and respond appropriately to such contextually sensitive questions.

Using ChatGPT APIs with LLM-Related Tools

ChatGPT models, such as gpt-4, gpt-3.5-turbo, gpt-4-0314, and gpt-3.5-turbo-0301, can be accessed through APIs with just a few lines of code. These language models have powerful capabilities, including few-shot learning and summarization, which can aid in managing conversation memory effectively. By using these tools, chatbots can better understand and respond to contextual questions, thereby enhancing the overall user experience.
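As a rough illustration of what "a few lines of code" can look like, the sketch below builds a request body in the role/content message format used by the Chat Completions API. The helper name `build_chat_request` is hypothetical, and actually sending the request (which needs an API key and an HTTP client or SDK) is deliberately left out.

```python
import json

def build_chat_request(model, history, user_message):
    """Return a request body with the earlier turns plus the new user message.

    `build_chat_request` is an illustrative helper, not part of any SDK.
    """
    messages = list(history)  # copy so the caller's history is not mutated
    messages.append({"role": "user", "content": user_message})
    return {"model": model, "messages": messages}

# Earlier turns from the weather example above.
history = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "What is the weather like today?"},
    {"role": "assistant", "content": "It's sunny and warm."},
]

request_body = build_chat_request(
    "gpt-3.5-turbo", history, "Should I bring an umbrella?"
)
print(json.dumps(request_body, indent=2))
```

Because the earlier turns travel inside `messages`, the model has what it needs to connect the umbrella question to the weather.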

Practical Examples of Context Management

Let’s explore some practical examples of effectively managing conversation context with ChatGPT APIs:

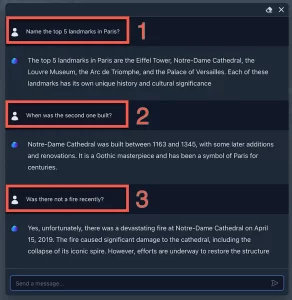

Using Previous Dialog Turns: By incorporating previous dialog turns into the conversation, the chatbot can understand the context of the current question. This approach allows it to answer contextual questions, making the conversation more natural and engaging.

Conversation Truncation: Since models have token limits, conversations may need to be truncated. A rolling log of the conversation history, containing only the most recent dialog turns, can be submitted to overcome this limitation.
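The rolling log described above could be sketched roughly as follows. The function names are hypothetical, and the token count is a crude assumption (one token per whitespace-separated word); a real application would use the tokenizer that matches its model.

```python
def estimate_tokens(text):
    # Rough assumption: one token per whitespace-separated word.
    return len(text.split())

def truncate_history(messages, max_tokens, count_tokens=estimate_tokens):
    """Keep system messages plus the most recent turns that fit the budget."""
    system = [m for m in messages if m["role"] == "system"]
    turns = [m for m in messages if m["role"] != "system"]

    # System messages are always kept, so they are charged to the budget first.
    budget = max_tokens - sum(count_tokens(m["content"]) for m in system)

    kept = []
    for message in reversed(turns):  # walk from newest to oldest
        cost = count_tokens(message["content"])
        if cost > budget:
            break  # this turn and everything older is dropped
        kept.append(message)
        budget -= cost

    kept.reverse()  # restore chronological order
    return system + kept

log = [
    {"role": "system", "content": "Be brief."},
    {"role": "user", "content": "one two three"},
    {"role": "assistant", "content": "four five"},
    {"role": "user", "content": "six seven eight nine"},
]
trimmed = truncate_history(log, max_tokens=8)
```

In this run the oldest user turn no longer fits the budget and is dropped, while the system message and the two most recent turns are kept.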

The Importance of Conversational History

It’s essential to note that AI language models like ChatGPT have no memory of previous requests or interactions. Therefore, all relevant information must be supplied via the conversation itself. By understanding the importance of conversational history, developers can design chatbots that deliver more contextually relevant responses.
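One way to picture this statelessness is a small session object that resends the full history on every request. This is a minimal sketch: `ChatSession` and `fake_model` are hypothetical names, and `fake_model` is a stand-in that simply reports how much history it received instead of calling a real API.

```python
class ChatSession:
    """Holds the conversation on the client side and resends it each turn."""

    def __init__(self, complete, system_prompt="You are a helpful assistant."):
        # `complete` is any callable that maps a message list to a reply string.
        self.complete = complete
        self.messages = [{"role": "system", "content": system_prompt}]

    def ask(self, user_message):
        self.messages.append({"role": "user", "content": user_message})
        # The model sees only what is passed here; nothing is remembered for us.
        reply = self.complete(list(self.messages))
        self.messages.append({"role": "assistant", "content": reply})
        return reply

def fake_model(messages):
    # Stand-in for a real model call: reports how many user turns it can see.
    user_turns = [m for m in messages if m["role"] == "user"]
    return f"I can see {len(user_turns)} user message(s)."

session = ChatSession(fake_model)
first = session.ask("What is the weather like today?")
second = session.ask("Should I bring an umbrella?")
print(first)
print(second)
```

Swapping `fake_model` for a function that calls an actual chat API would leave the rest of the session logic unchanged.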

Challenges with Model Memory and Dialogue State

Despite the advancements in AI language models, challenges remain in effectively managing conversation memory. Detection tools for identifying AI-generated content may still produce false positives, making it difficult to determine whether a response comes from the model or a human. In addition, ensuring that the model responds accurately and in context requires careful design and implementation.

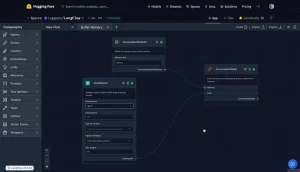

Scaling Conversation Memory: No-Code Generative App Development

To make conversation memory manageable and scalable, developers can adopt a no-code generative app development approach. Dividing programming tasks into separate components, such as Conversation Memory, can streamline the development process and improve the efficiency of managing context.

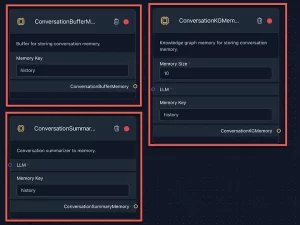

Understanding the Components of Conversation Memory

Key components of conversation memory include a buffer for storing recent conversation turns, a conversation summarizer that condenses the conversation into memory, and a knowledge graph memory for storing contextual information. Implementing these components can significantly enhance the chatbot’s ability to retain and recall past interactions.
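To make the three components more concrete, here is a minimal sketch of how they might fit together. All class and function names are hypothetical and do not come from any particular library, and `naive_summarize` merely concatenates text where a real system would likely ask a language model for a summary.

```python
class BufferMemory:
    """Stores the most recent turns verbatim."""

    def __init__(self, max_turns=4):
        if max_turns < 1:
            raise ValueError("max_turns must be at least 1")
        self.max_turns = max_turns
        self.turns = []

    def add(self, role, content):
        """Append a turn and return any turns pushed out of the buffer."""
        self.turns.append((role, content))
        evicted = self.turns[:-self.max_turns]
        self.turns = self.turns[-self.max_turns:]
        return evicted

class SummaryMemory:
    """Folds turns that left the buffer into a running summary."""

    def __init__(self, summarize):
        self.summarize = summarize  # callable: (old_summary, turns) -> summary
        self.summary = ""

    def absorb(self, turns):
        if turns:
            self.summary = self.summarize(self.summary, turns)

class KnowledgeGraphMemory:
    """Stores contextual facts as (subject, relation, object) triples."""

    def __init__(self):
        self.triples = set()

    def add_fact(self, subject, relation, obj):
        self.triples.add((subject, relation, obj))

    def facts_about(self, subject):
        return sorted(t for t in self.triples if t[0] == subject)

def naive_summarize(old_summary, turns):
    # Placeholder summarizer: concatenates text instead of condensing it.
    joined = " ".join(f"{role}: {content}" for role, content in turns)
    return (old_summary + " " + joined).strip()

buffer = BufferMemory(max_turns=2)
summary = SummaryMemory(naive_summarize)
graph = KnowledgeGraphMemory()

conversation = [
    ("user", "What is your favorite color?"),
    ("assistant", "I like blue."),
    ("user", "How about green?"),
]
for role, content in conversation:
    summary.absorb(buffer.add(role, content))  # evicted turns get summarized

graph.add_fact("assistant", "likes", "blue")
```

A prompt for the next turn could then combine `summary.summary`, the facts from `graph`, and the verbatim turns in `buffer.turns`.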

A Basic General Chatbot with Memory

Let’s explore a basic general chatbot equipped with memory, which allows it to handle ambiguous questions:

User: What is your favorite color?

Chatbot: I like blue.

User: How about green?

Chatbot: Yes, green is a beautiful color too!

In this example, the chatbot refers back to the user’s previous question and acknowledges the context: “How about green?” is only answerable because the earlier question about favorite colors is remembered.

Contextually Sensitive Follow-Up Questions

Contextual sensitivity is crucial in conversation memory management. When users ask follow-up questions, the chatbot should be able to recognize the context and provide relevant responses. Understanding the nuances of conversational context enhances the chatbot’s overall performance and user satisfaction.

Conclusion

Effectively managing conversation memory and context is essential in creating intelligent and user-friendly chatbots. With the availability of ChatGPT APIs and LLM-related tools, developers can leverage advanced language models to achieve contextually relevant interactions. By understanding the challenges and practical approaches to context management, we can build chatbots that deliver more personalized and engaging conversations, enhancing the overall user experience.